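Table of contents

- 1. Downloading and configuring the Embedding

- 2. Getting to know the Embedding

- 3. Mapping word vectors to a low-dimensional space

- 4. A bag-of-words model based on TokenEmbedding

- 5. Building a Tokenizer

- 5.2 Checking the similarity of sentence pairs

- 6. Viewing sentence tensors with VisualDL

- Final words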

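This article is based on Baidu's PaddlePaddle platform.

Project page:

[NLP Check-in Camp] Practice Lesson 1: Word Embedding Application Demo

VisualDL official documentation

1. Downloading and configuring the Embedding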

pip install --upgrade paddlenlp -i https://pypi.org/simple

Requirement already up-to-date: paddlenlp in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (2.1.1)
Requirement already satisfied, skipping upgrade: jieba in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp) (0.42.1)
Requirement already satisfied, skipping upgrade: h5py in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (from paddlenlp) (2.9.0)
...

from paddlenlp.embeddings import TokenEmbedding

# Initialize TokenEmbedding; if the pretrained embedding has not been downloaded yet,
# it is downloaded automatically and then loaded
token_embedding = TokenEmbedding(embedding_name="w2v.baidu_encyclopedia.target.word-word.dim300")

# Show the details of token_embedding
print(token_embedding)

[2021-11-10 21:42:13,213] [ INFO] - Loading token embedding...

W1110 21:42:18.557029 1415 device_context.cc:447] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 10.1, Runtime API Version: 10.1

W1110 21:42:18.562464 1415 device_context.cc:465] device: 0, cuDNN Version: 7.6.

[2021-11-10 21:42:23,903] [ INFO] - Finish loading embedding vector.

[2021-11-10 21:42:23,906] [ INFO] - Token Embedding info:

Unknown index: 635963

Unknown token: [UNK]

Padding index: 635964

Padding token: [PAD]

Shape :[635965, 300]

Object type: TokenEmbedding(635965, 300, padding_idx=635964, sparse=False)

Unknown index: 635963

Unknown token: [UNK]

Padding index: 635964

Padding token: [PAD]

Parameter containing:

Tensor(shape=[635965, 300], dtype=float32, place=CUDAPlace(0), stop_gradient=False,

[[-0.24200200, 0.13931701, 0.07378800, ..., 0.14103900,

0.05592300, -0.08004800],

[-0.08671700, 0.07770800, 0.09515300, ..., 0.11196400,

0.03082200, -0.12893000],

[-0.11436500, 0.12201900, 0.02833000, ..., 0.11068700,

0.03607300, -0.13763499],

...,

[ 0.02628800, -0.00008300, -0.00393500, ..., 0.00654000,

0.00024600, -0.00662600],

[-0.01989385, -0.02005955, 0.01555019, ..., 0.00248810,

-0.02033536, -0.01693229],

[ 0. , 0. , 0. , ..., 0. ,

0. , 0. ]])
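To make the printed summary easier to read, here is a minimal sketch of what an embedding table with an unknown index and a padding index does. It is a toy NumPy stand-in, not PaddleNLP's implementation: the vocabulary, the 4-dimensional vectors, and the `lookup` helper are all assumptions made up for illustration.

```python
import numpy as np

# Toy vocabulary (hypothetical); the real table above has 635965 rows of 300 dimensions.
vocab = {"中国": 0, "女孩": 1, "[UNK]": 2, "[PAD]": 3}
unk_idx, pad_idx, dim = vocab["[UNK]"], vocab["[PAD]"], 4

rng = np.random.default_rng(0)
table = rng.normal(scale=0.1, size=(len(vocab), dim)).astype("float32")
table[pad_idx] = 0.0  # the padding row is all zeros, like the last row printed above


def lookup(words):
    """Map words to rows of the table, falling back to the [UNK] row."""
    ids = [vocab.get(word, unk_idx) for word in words]
    return table[ids]


vectors = lookup(["中国", "不存在的词", "[PAD]"])
print(vectors.shape)
```

A word that is missing from the vocabulary gets the `[UNK]` row, and padding positions contribute nothing because their row is zero.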

2. Getting to know the Embedding

TokenEmbedding.search() returns the word vector of a given word.

test_token_embedding = token_embedding.search("中国")

print(test_token_embedding)

[[ 0.260801 0.1047 0.129453 -0.257317 -0.16152 0.19567 -0.074868 0.361168 0.245882 -0.219141 -0.388083 0.235189 0.029316 0.154215 -0.354343 0.017746 0.009028 0.01197 -0.121429 0.096542 0.009255 0.039721 0.363704 -0.239497 -0.41168 0.16958 0.261758 0.022383 -0.053248 -0.000994 -0.209913 -0.208296 0.197332 -0.3426 -0.162112 0.134557 -0.250201 0.431298 0.303116 0.517221 0.243843 0.022219 -0.136554 -0.189223 0.148563 -0.042963 -0.456198 0.14546 -0.041207 0.049685 0.20294 0.147355 -0.206953 -0.302796 -0.111834 0.128183 0.289539 -0.298934 -0.096412 0.063079 0.324821 -0.144471 0.052456 0.088761 -0.040925 -0.103281 -0.216065 -0.200878 -0.100664 0.170614 -0.355546 -0.062115 -0.52595 -0.235442 0.300866 -0.521523 -0.070713 -0.331768 0.023021 0.309111 -0.125696 0.016723 -0.0321 -0.200611 0.057294 -0.128891 -0.392886 0.423002 0.282569 -0.212836 0.450132 0.067604 -0.124928 -0.294086 0.136479 0.091505 -0.061723 -0.577495 0.293856 -0.401198 0.302559 -0.467656 0.021708 -0.088507 0.088322 -0.015567 0.136594 0.112152 0.005394 0.133818 0.071278 -0.198807 0.043538 0.116647 -0.210486 -0.217972 -0.320675 0.293977 0.277564 0.09591 -0.359836 0.473573 0.083847 0.240604 0.441624 0.087959 0.064355 -0.108271 0.055709 0.380487 -0.045262 0.04014 -0.259215 -0.398335 0.52712 -0.181298 0.448978 -0.114245 -0.028225 -0.146037 0.347414 -0.076505 0.461865 -0.105099 0.131892 0.079946 0.32422 -0.258629 0.05225 0.566337 0.348371 0.124111 0.229154 0.075039 -0.139532 -0.08839 -0.026703 -0.222828 -0.106018 0.324477 0.128269 -0.045624 0.071815 -0.135702 0.261474 0.297334 -0.031481 0.18959 0.128716 0.090022 0.037609 -0.049669 0.092909 0.0564 -0.347994 -0.367187 -0.292187 0.021649 -0.102004 -0.398568 -0.278248 -0.082361 -0.161823 0.044846 0.212597 -0.013164 0.005527 -0.004024 0.176243 0.237274 -0.174856 -0.197214 0.150825 -0.164427 -0.244255 -0.14897 0.098907 -0.295891 -0.013408 -0.146875 -0.126049 0.033235 -0.133444 -0.003258 0.082053 -0.162569 0.283657 0.315608 -0.171281 -0.276051 0.258458 0.214045 -0.129798 
-0.511728 0.198481 -0.35632 -0.186253 -0.203719 0.22004 -0.016474 0.080321 -0.463004 0.290794 -0.003445 0.061247 -0.069157 -0.022525 0.13514 0.001354 0.011079 0.014223 -0.079145 -0.41402 -0.404242 -0.301509 0.036712 0.037076 -0.061683 -0.202429 0.130216 0.054355 0.140883 -0.030627 -0.281293 -0.28059 -0.214048 -0.467033 0.203632 -0.541544 0.183898 -0.129535 -0.286422 -0.162222 0.262487 0.450505 0.11551 -0.247965 -0.15837 0.060613 -0.285358 0.498203 0.025008 -0.256397 0.207582 0.166383 0.669677 -0.067961 -0.049835 -0.444369 0.369306 0.134493 -0.080478 -0.304565 -0.091756 0.053657 0.114497 -0.076645 -0.123933 0.168645 0.018987 -0.260592 -0.019668 -0.063312 -0.094939 0.657352 0.247547 -0.161621 0.289043 -0.284084 0.205076 0.059885 0.055871 0.159309 0.062181 0.123634 0.282932 0.140399 -0.076253 -0.087103 0.07262 ]]

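cosine_sim() computes the cosine similarity between two words. The higher the score for semantically close words, the better the embedding captures meaning.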

score1 = token_embedding.cosine_sim("女孩", "女人")

score2 = token_embedding.cosine_sim("女孩", "书籍")

print('score1:', score1)

print('score2:', score2)

score1: 0.7017183
score2: 0.19189896

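The result above shows that the closer two words are in meaning, the shorter the distance between their vectors and the higher the corresponding cosine value.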
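For reference, cosine similarity is the dot product of two vectors divided by the product of their lengths. The sketch below computes it with NumPy on made-up 3-dimensional vectors; it only illustrates the formula and is not the code behind `token_embedding.cosine_sim`.

```python
import numpy as np


def cosine_sim(u, v):
    """Cosine of the angle between u and v: dot(u, v) / (|u| * |v|)."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


a = [1.0, 2.0, 0.0]
b = [2.0, 4.0, 0.0]  # same direction as a, so the similarity is close to 1
c = [0.0, 0.0, 3.0]  # orthogonal to a, so the similarity is close to 0

print(cosine_sim(a, b), cosine_sim(a, c))
```

Note that the score depends only on direction, not on vector length, which is why `a` and `b` count as maximally similar here.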

3. Mapping word vectors to a low-dimensional space

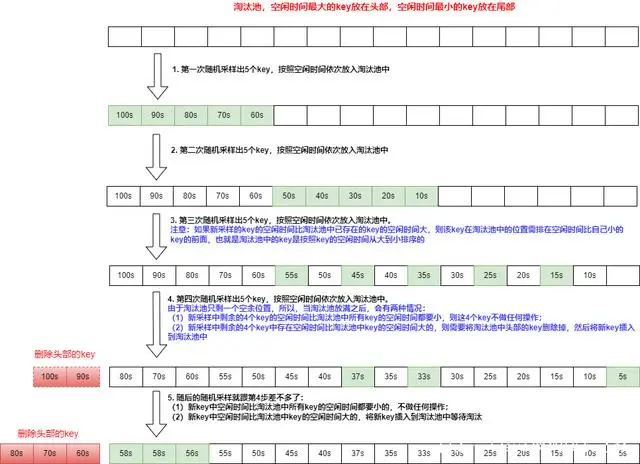

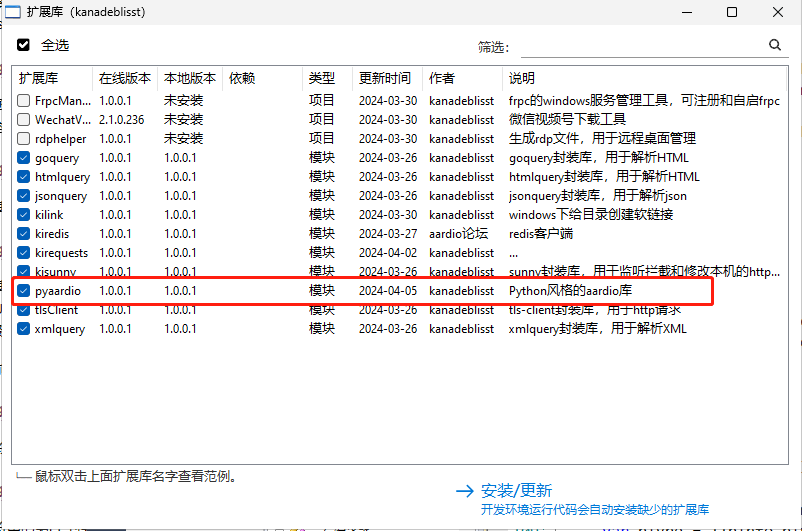

The High Dimensional component of VisualDL, a visualization tool for deep learning, can display the structure of the embedding. First, upgrade VisualDL to the latest version.

pip install --upgrade visualdl

pip install --upgrade paddlenlp -i https://pypi.org/simple

# Get the first 1000 words in the vocabulary
labels = token_embedding.vocab.to_tokens(list(range(0, 1000)))

# Fetch the embeddings of these 1000 words
test_token_embedding = token_embedding.search(labels)

# Use VisualDL's LogWriter to record the log
from visualdl import LogWriter

with LogWriter(logdir='./token_hidi') as writer:
    writer.add_embeddings(tag='test', mat=[i for i in test_token_embedding], metadata=labels)
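To give a feel for what "mapping to a low-dimensional space" means, here is a rough sketch of PCA, one common projection technique, written with NumPy. This is an illustration under my own assumptions, not VisualDL's code: the `pca_project` helper is hypothetical and the input is random data standing in for the 1000 word vectors.

```python
import numpy as np


def pca_project(mat, n_components=3):
    """Project the rows of mat onto their top principal components."""
    mat = np.asarray(mat, dtype=float)
    centered = mat - mat.mean(axis=0)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


rng = np.random.default_rng(0)
fake_embeddings = rng.normal(size=(1000, 300))  # stand-in for 1000 word vectors
projected = pca_project(fake_embeddings, n_components=3)
print(projected.shape)
```

Each 300-dimensional vector becomes a 3-dimensional point, which is what makes a scatter plot of the vocabulary possible.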

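Opening the VisualDL visualization interface gives the following view:

4. A bag-of-words model based on TokenEmbedding

- paddlenlp.embeddings.TokenEmbedding builds the word-embedding layer

- paddlenlp.seq2vec.BoWEncoder builds the sentence-modeling layer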

import paddle
import paddle.nn as nn
import paddlenlp


class BoWModel(nn.Layer):
    def __init__(self, embedder):
        super().__init__()
        self.embedder = embedder
        emb_dim = self.embedder.embedding_dim
        self.encoder = paddlenlp.seq2vec.BoWEncoder(emb_dim)
        self.cos_sim_func = nn.CosineSimilarity(axis=-1)

    def get_cos_sim(self, text_a, text_b):
        text_a_embedding = self.forward(text_a)
        text_b_embedding = self.forward(text_b)
        cos_sim = self.cos_sim_func(text_a_embedding, text_b_embedding)
        return cos_sim

    def forward(self, text):
        # Shape: (batch_size, num_tokens, embedding_dim)
        embedded_text = self.embedder(text)
        # Shape: (batch_size, embedding_dim)
        summed = self.encoder(embedded_text)
        return summed


model = BoWModel(embedder=token_embedding)
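The shape comments in `forward` can be checked with a small NumPy sketch of the bag-of-words idea: sum the token vectors of a sentence into one vector, then compare sentences by cosine similarity. The helper names and toy 2-dimensional vectors below are my own assumptions, not the PaddleNLP code.

```python
import numpy as np


def bow_encode(embedded):
    """Sum token vectors: (batch, num_tokens, dim) -> (batch, dim)."""
    return np.asarray(embedded, dtype=float).sum(axis=1)


def cos_sim(a, b):
    """Row-wise cosine similarity along the last axis."""
    num = (a * b).sum(axis=-1)
    den = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1)
    return num / den


sent_a = np.array([[[1.0, 0.0], [0.0, 1.0]]])              # 1 sentence, 2 tokens
sent_b = np.array([[[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]])  # 1 sentence, 3 tokens
vec_a, vec_b = bow_encode(sent_a), bow_encode(sent_b)
print(vec_a, vec_b, cos_sim(vec_a, vec_b))
```

Because the encoder only sums, reordering the tokens of a sentence gives exactly the same sentence vector. That is the trade-off of a bag-of-words model: it is simple and fast, but it ignores word order.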

5. Building a Tokenizer

Build a Tokenizer from the TokenEmbedding vocabulary.

# Tokenizer; note that data is a hand-written script
from data import Tokenizer

tokenizer = Tokenizer()
tokenizer.set_vocab(vocab=token_embedding.vocab)
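The hand-written `data.py` is not shown in this post, so here is a hypothetical stand-in that exposes the same two methods used in this article, `set_vocab` and `text_to_ids`. Everything inside it is an assumption: it takes a plain dict as the vocabulary and splits text by greedy forward maximum matching, which may differ from what the real script does.

```python
class ToyTokenizer:
    """Hypothetical stand-in for the Tokenizer in the hand-written data.py."""

    def __init__(self, unk_token="[UNK]"):
        self.vocab = {}
        self.unk_token = unk_token
        self.max_len = 1

    def set_vocab(self, vocab):
        # Assumption: vocab is a plain dict mapping token -> id.
        self.vocab = dict(vocab)
        self.max_len = max(len(token) for token in self.vocab)

    def tokenize(self, text):
        """Greedy forward maximum matching against the vocabulary."""
        tokens, i = [], 0
        while i < len(text):
            for size in range(min(self.max_len, len(text) - i), 0, -1):
                piece = text[i:i + size]
                # Single characters are always accepted so the loop advances.
                if piece in self.vocab or size == 1:
                    tokens.append(piece)
                    i += size
                    break
        return tokens

    def text_to_ids(self, text):
        unk_id = self.vocab[self.unk_token]
        return [self.vocab.get(token, unk_id) for token in self.tokenize(text)]


toy = ToyTokenizer()
toy.set_vocab({"[UNK]": 0, "词": 1, "向量": 2, "词向量": 3, "应用": 4})
print(toy.tokenize("词向量的应用"))
print(toy.text_to_ids("词向量的应用"))
```

The key point is that the tokenizer and the embedding must share one vocabulary, so that every id produced by `text_to_ids` points at the right row of the embedding table.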

text_pairs = {}

with open('text_pair.txt', 'r', encoding="utf8") as f:
    for line in f:
        # Each line holds two texts separated by a tab
        text_a, text_b = line.strip().split("\t")
        if text_a not in text_pairs:
            text_pairs[text_a] = []
        text_pairs[text_a].append(text_b)
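The contents of `text_pair.txt` are not shown here. The sketch below assumes each line holds two texts separated by a tab, and runs the same grouping logic on an in-memory sample so it can be tried without the file. The `load_text_pairs` helper and the sample strings are made up for illustration.

```python
import io
from collections import defaultdict


def load_text_pairs(lines):
    """Group tab-separated (text_a, text_b) lines by text_a."""
    pairs = defaultdict(list)
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue  # skip blank lines instead of failing on unpacking
        text_a, text_b = line.split("\t", 1)
        pairs[text_a].append(text_b)
    return dict(pairs)


# Hypothetical sample in the assumed format.
sample = io.StringIO(
    "query 1\tcandidate A\n"
    "query 1\tcandidate B\n"
    "\n"
    "query 2\tcandidate C\n"
)
text_pairs_demo = load_text_pairs(sample)
print(text_pairs_demo)
```

Using `defaultdict(list)` removes the explicit "is the key already present" check, and the blank-line guard keeps a stray empty line from raising an unpacking error.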

5.2 Checking the similarity of sentence pairs

for text_a, text_b_list in text_pairs.items():
    # Look up the token ids of sentence a
    text_a_ids = paddle.to_tensor([tokenizer.text_to_ids(text_a)])

    # For every sentence paired with a
    for text_b in text_b_list:
        # Look up the token ids of sentence b
        text_b_ids = paddle.to_tensor([tokenizer.text_to_ids(text_b)])
        print("text_a: {}".format(text_a))
        print("text_b: {}".format(text_b))
        # "相似度为" means "similarity"
        print("相似度为: {}".format(model.get_cos_sim(text_a_ids, text_b_ids).numpy()[0]))
        print()

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: 多项式矩阵的左共轭积及其应用
相似度为: 0.8861938714981079

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: 退化阻尼对高维可压缩欧拉方程组经典解的影响
相似度为: 0.7975839972496033

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: Burgers方程基于特征正交分解方法的数值解法研究
相似度为: 0.8188782930374146

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: 有界对称域上解析函数空间的若干性质
相似度为: 0.8041478395462036

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: 基于卷积神经网络的图像复杂度研究与应用
相似度为: 0.7444740533828735

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: Cartesian发射机中线性功率放大器的研究
相似度为: 0.7536822557449341

text_a: 多项式矩阵左共轭积对偶Sylvester共轭和数学算子完备参数解
text_b: CFRP加固WF型梁侧扭屈曲的几何非线性有限元分析
相似度为: 0.7572889924049377

... (omitted here)

text_a: 互联网企业互动问答社区产品盈利模式经营策略商业价值
text_b: 基于创新的中国广告产业演化研究
相似度为: 0.7780816555023193

text_a: 互联网企业互动问答社区产品盈利模式经营策略商业价值
text_b: 高管性别结构、内部制衡与企业技术创新——基于我国创业板上市企业的实证研究
相似度为: 0.7984799146652222

text_a: 互联网企业互动问答社区产品盈利模式经营策略商业价值
text_b: 环境扫描对企业竞争优势的影响研究--以电子信息行业为例
相似度为: 0.7848146557807922

text_a: 互联网企业互动问答社区产品盈利模式经营策略商业价值
text_b: 高管团队特征对公司绩效的影响——以我国新三板教育行业公司为例
相似度为: 0.8023167252540588

text_a: 互联网企业互动问答社区产品盈利模式经营策略商业价值
text_b: 国有润滑油企业市场开发策略研究
相似度为: 0.8262609243392944

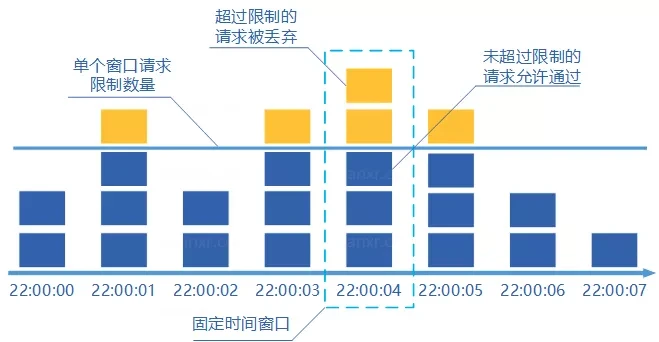

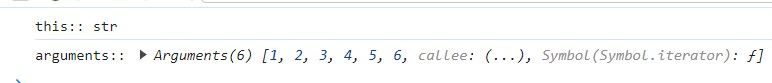

6. Viewing sentence tensors with VisualDL

# Use VisualDL's LogWriter to record the log
from visualdl import LogWriter

# Collect each sentence and its vector
label_list = []
embedding_list = []

for text_a, text_b_list in text_pairs.items():
    text_a_ids = paddle.to_tensor([tokenizer.text_to_ids(text_a)])
    embedding_list.append(model(text_a_ids).flatten().numpy())
    label_list.append(text_a)

    for text_b in text_b_list:
        # Look up the token ids of sentence b
        text_b_ids = paddle.to_tensor([tokenizer.text_to_ids(text_b)])
        embedding_list.append(model(text_b_ids).flatten().numpy())
        label_list.append(text_b)

with LogWriter(logdir='./sentence_hidi') as writer:
    writer.add_embeddings(tag='test', mat=embedding_list, metadata=label_list)

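Opening the VisualDL interface gives the following view:

Final words

Dear readers, since you have made it this far, please take a second to give this post a like. Your support is the author's biggest motivation to keep writing!

<(^-^)>

My knowledge is limited, so if you spot any mistakes, corrections are very welcome.

This article is intended solely for study and discussion, not for any commercial use. If there are any copyright concerns, please contact the author promptly.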